Over the last 18 months, AI coding has become an integral part of real development operations. According to Stack Overflow:

- 46% of code written by GitHub Copilot users is now AI-generated

- 90% of Fortune 100 companies use GitHub Copilot

- 84% of all developers globally use AI coding tools

- $30.1B projected market size by 2032 (up from $4.91B in 2024)

Development has gotten 10x faster, but testing hasn’t.

This speed, however, comes with a catch most teams aren’t openly talking about.

When a developer produces code using AI, they often miss the small details. A vibe-coded feature might touch three services, or it might alter a data model in a way that gets missed during a “skim review”. In most cases like this, the output looks reasonable and it ships.

Then the endless line of “bugs” starts coming in.

Blind Approval of AI-Generated Code

When the pressure is high, developers focus on shipping fast, and that’s when quality often gets overlooked. The “State of AI Coding 2025” report recorded a 76% increase in output per developer. At the same time, the average size of a pull request increased by 33%. More code arriving in larger pull requests creates a new visibility problem inside development teams.

The risk is not always visible immediately. What if the AI assumed something incorrect about a business rule? What if it changed logic in a way that only surfaces when a customer hits a specific edge case two weeks after release? At least 48% of AI-generated code contains security vulnerabilities. Research on GitHub Copilot found that 40% of generated code was flagged for insecure patterns.

AI writes code based on patterns, not a full understanding of the system, so small but important issues can be missed during reviews.

And what I’m seeing right now in the “AI coding boom” is exciting. But it’s also setting up a failure mode that the industry isn’t talking about loudly enough.

1. The Velocity Problem Nobody Is Talking About Loudly Enough

The development cycle has been turbocharged. And QA? QA is still running on the same fuel it was using two years ago.

Your developer ships a feature in Sprint 16. In Sprint 20, a button moves or an API field gets renamed, and tests break. To fix the old test, you need to reload the context: what was the original intent, what changed, and how does it affect the rest of the suite? The result is a growing velocity mismatch, where the gap between development output and QA capacity widens with every release. This leads to a fast-growing testing backlog, an accumulation of quality debt, and an increased risk of shipping bugs to production.

2. Incomplete Automation Testing, Due to Confusing Scenarios

Here is how testing usually works: the automated tests get built on top of incomplete, outdated information.

The data reflects this. According to a survey found on Stack Overflow, 88% of participants reported being less than confident in implementing AI-generated code. A GitLab survey found that 29% of teams had to roll back releases because of errors that slipped through. And underneath all of this is a problem nobody talks about openly: there is no single source of truth for what is actually tested. Nobody has a clear, current record of which tests are automated, which are still manual, and which exist only in someone’s head.

- The manual tester assumes automation covers it.

- The automation engineer assumes it’s still manually checked.

Critical flows can be missed because everyone thinks someone else is testing them.
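One way to picture a single source of truth is a small registry that records, for each flow, how it is tested and who owns that check. The sketch below is illustrative only: the `Flow` and `Coverage` types, the flow names, and the owner labels are all assumptions, not part of any specific tool.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Coverage(Enum):
    AUTOMATED = "automated"
    MANUAL = "manual"
    UNKNOWN = "unknown"  # nobody has confirmed how this flow is tested


@dataclass(frozen=True)
class Flow:
    name: str
    coverage: Coverage
    owner: Optional[str] = None  # person or team accountable for the check


def unowned_flows(flows: List[Flow]) -> List[str]:
    """Return flows that no one has explicitly claimed to test."""
    return [
        f.name
        for f in flows
        if f.coverage is Coverage.UNKNOWN or f.owner is None
    ]


# Hypothetical registry entries for illustration.
registry = [
    Flow("login", Coverage.AUTOMATED, owner="automation"),
    Flow("checkout", Coverage.MANUAL, owner="manual-qa"),
    Flow("refund", Coverage.UNKNOWN),
    Flow("password-reset", Coverage.AUTOMATED),  # automated, but no owner recorded
]

print(unowned_flows(registry))  # ['refund', 'password-reset']
```

Anything this check returns is a flow where "someone else is testing it" may be an assumption rather than a fact.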

3. Brittle Tests Break Every Time the UI Changes

A developer makes a small structural change, and suddenly ten tests fail because the test is pointing at something that no longer exists.
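A minimal sketch of why this happens, using Python's standard-library XML parser as a stand-in for a real browser driver. The page markup, the `data-testid` attribute, and both locators are assumptions made for illustration: one locator depends on where the button sits in the layout, the other on a stable identifier.

```python
import xml.etree.ElementTree as ET

# Two versions of the same page: in v2 a banner <div> was added above the button.
PAGE_V1 = """
<body>
  <div><button data-testid="checkout">Buy</button></div>
</body>
"""
PAGE_V2 = """
<body>
  <div>Free shipping this week</div>
  <div><button data-testid="checkout">Buy</button></div>
</body>
"""

POSITIONAL = "./div[1]/button"                      # tied to page layout
BY_TEST_ID = ".//button[@data-testid='checkout']"   # tied to a stable identifier


def find(page: str, locator: str):
    """Return the first element matching the locator, or None."""
    return ET.fromstring(page).find(locator)


for name, page in (("v1", PAGE_V1), ("v2", PAGE_V2)):
    print(name, "positional:", find(page, POSITIONAL) is not None,
          "| test id:", find(page, BY_TEST_ID) is not None)
```

The positional locator stops matching as soon as the banner is added, while the identifier-based one keeps working. Every test written against the positional path fails together, which is how one small change can break ten tests.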

As the number of tests grows, the maintenance burden grows faster. Teams find themselves spending more time fixing broken tests than writing new ones.

The World Quality Report 2025-26 found that 50% of QA leaders cite “maintenance burden and unstable scripts” as their primary challenge with test automation.

If your team is spending most of its time keeping existing tests alive rather than expanding coverage, your automation investment is working against you.

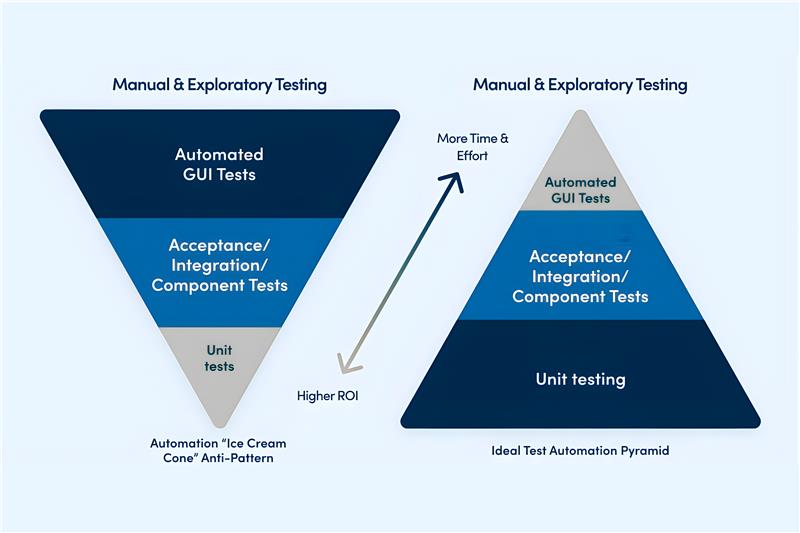

Reimagining the Testing Pyramid for AI-Driven Development

The way teams think about testing is being turned upside down. And it needs to be.

When code can be generated at speed, the real challenge is no longer writing it. It is verifying that what was built actually does what was intended.

This shifts the weight of quality assurance toward tests that check how the entire system behaves end-to-end, not just individual pieces in isolation. And those tests need to keep up with a product that is changing faster than ever.

“In a world where coding approaches zero cost, the real value moves from writing code to validating intent.”

– Khurram Javed Mir, Founder, Kualitee

In practice, teams using AI-assisted development tools are generating code faster than any traditional testing approach can validate. Checking individual units of code, even when that checking is also AI-generated, cannot keep pace with the volume or the complexity.

The layers that matter most now are the ones that verify how everything works together. How one part of the system talks to another. Whether the full user journey behaves correctly. And critically, whether the tests themselves can adapt when the product changes, without someone manually going in to fix them.

This is where a new approach is emerging. Rather than one large, rigid test suite that a team maintains by hand, purpose-built AI testing systems are beginning to deploy specialized agents, each with a distinct job: generating tests, running them, identifying what broke and why, and finding gaps in coverage.

Teams working with this model are reporting faster release cycles without the corresponding rise in production defects.

The shift is from a test suite you maintain to a quality system that evolves with your product.

5 Steps to Rethink Your Automation Testing Strategy

#1. Treat AI-Generated Code as Its Own Risk Category

Flag pull requests where AI-generated contributions exceed a defined threshold. Apply additional scrutiny to business-critical workflows, authentication flows, and third-party integrations.

A 2025 TechRadar report found that many developers do not consistently review AI-generated code, creating what researchers are calling “verification debt.”

One Fortune 500 retailer has already operationalized this. AI contribution percentage is now a required field in every code submission, and anything exceeding 30% automatically triggers an enhanced review process.
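A rule like this can be sketched as a small gate in the review pipeline. This is a hedged illustration, not the retailer's actual implementation: the `PullRequest` shape, the path prefixes for critical areas, and the choice to escalate any AI-assisted change that touches them are all assumptions. Only the 30% figure comes from the example above.

```python
from dataclasses import dataclass, field
from typing import List

AI_SHARE_THRESHOLD = 30.0  # percent, taken from the retailer example
# Hypothetical path prefixes for business-critical areas.
CRITICAL_PREFIXES = ("auth/", "payments/", "integrations/")


@dataclass
class PullRequest:
    ai_contribution_pct: float  # self-reported field on the submission
    changed_files: List[str] = field(default_factory=list)


def review_level(pr: PullRequest) -> str:
    """Return 'enhanced' or 'standard' for a pull request."""
    touches_critical = any(
        path.startswith(CRITICAL_PREFIXES) for path in pr.changed_files
    )
    if pr.ai_contribution_pct > AI_SHARE_THRESHOLD:
        return "enhanced"
    # Assumption: any AI-assisted change to a critical area is also escalated.
    if touches_critical and pr.ai_contribution_pct > 0:
        return "enhanced"
    return "standard"


print(review_level(PullRequest(45.0, ["ui/button.tsx"])))    # enhanced
print(review_level(PullRequest(10.0, ["auth/session.py"])))  # enhanced
print(review_level(PullRequest(10.0, ["docs/readme.md"])))   # standard
```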

The precedent is being set. The question is whether your organization gets ahead of it or responds to an incident that forces the conversation.

#2. Focus on Test Execution, Not Just Test Creation

As AI generates tests at scale, the bottleneck shifts. The problem is no longer creating tests. It is running them reliably.

Test environments need to be consistent, isolated, and reflective of what is actually in production. Each release should trigger its own clean, temporary environment. If your infrastructure cannot run tests simultaneously at scale, your automation is adding time to every release cycle, not removing it.
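The idea of clean, temporary, parallel environments can be illustrated at small scale. In the sketch below, a fresh temporary directory stands in for an ephemeral environment and a thread pool stands in for parallel infrastructure. Both are simplifying assumptions; a real setup would more likely use containers or per-release preview environments. The test functions are hypothetical.

```python
import tempfile
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path
from typing import Callable, Dict

TestFn = Callable[[Path], bool]


def run_isolated(test: TestFn) -> bool:
    """Run one test in a fresh directory that is deleted afterwards."""
    with tempfile.TemporaryDirectory() as workdir:
        try:
            return test(Path(workdir))
        except Exception:
            return False  # a crashing test counts as a failure


def run_suite(tests: Dict[str, TestFn], max_workers: int = 4) -> Dict[str, bool]:
    """Run all tests concurrently, each in its own environment."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {name: pool.submit(run_isolated, fn) for name, fn in tests.items()}
        return {name: fut.result() for name, fut in futures.items()}


# Hypothetical tests: one leaves state behind, one requires a clean start.
def writes_state(workdir: Path) -> bool:
    (workdir / "state.txt").write_text("dirty")
    return True


def expects_clean_dir(workdir: Path) -> bool:
    return not any(workdir.iterdir())


results = run_suite({"writes_state": writes_state, "expects_clean_dir": expects_clean_dir})
print(results)
```

Because each test gets its own directory, the leftover file from one test cannot leak into the other, regardless of the order in which they run.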

Organizations that have integrated AI-driven testing directly into their release pipelines, with parallel execution and automatic adaptation to product changes, are reporting 35% faster regression cycles and up to 45% improvement in defect detection rates.

Leader’s Move: Focus on execution. Done well, quality assurance stops being the function that slows releases down and becomes the function that makes faster releases possible.

#3. Centralize Your Defect Intelligence

AI-generated code introduces a new category of defects. Logic that looks correct but does not match how your system actually works. References to things that do not exist. Managing this reactively, ticket by ticket, is not sustainable at the pace AI-assisted development moves.

What is needed is a system that identifies patterns across releases, flags recurring issues before they compound, and connects defects directly to the tests designed to catch them, not just to the tickets raised after the fact.

When code is being generated at speed, defect visibility becomes a business continuity issue. Too many teams are making release decisions without knowing whether the same class of problem has surfaced three sprints in a row.
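Spotting a class of defect that keeps coming back can be sketched in a few lines. The example assumes each defect is tagged with a sprint number and a category. The category names and the three-sprint cutoff are illustrative choices, not a standard.

```python
from collections import defaultdict
from typing import Iterable, List, Tuple


def recurring_categories(
    defects: Iterable[Tuple[int, str]], min_streak: int = 3
) -> List[str]:
    """Return defect categories seen in at least `min_streak` consecutive sprints.

    `defects` is an iterable of (sprint_number, category) pairs.
    """
    sprints_by_category = defaultdict(set)
    for sprint, category in defects:
        sprints_by_category[category].add(sprint)

    flagged = []
    for category, sprints in sprints_by_category.items():
        streak = best = 0
        previous = None
        for sprint in sorted(sprints):
            # Extend the streak only when this sprint directly follows the last one.
            streak = streak + 1 if previous is not None and sprint == previous + 1 else 1
            best = max(best, streak)
            previous = sprint
        if best >= min_streak:
            flagged.append(category)
    return sorted(flagged)


# Hypothetical defect log for illustration.
defects = [
    (18, "hallucinated-reference"),
    (19, "hallucinated-reference"),
    (20, "hallucinated-reference"),
    (18, "business-rule-mismatch"),
    (20, "business-rule-mismatch"),
]

print(recurring_categories(defects))  # ['hallucinated-reference']
```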

Test management that connects execution results to defect trends gives leadership the visibility to act early, before a recurring issue reaches production and becomes a customer problem.

That said, here’s how Kualitee compares with other QA management tools.

#4. Make QA a Shared Responsibility in Dev Teams

If QA only gets involved after development, you lose early feedback loops that catch issues closer to the source.

To keep up with faster development cycles, QA must be integrated into the development process itself:

- Testing cannot remain a stage that happens after development finishes. It needs to run alongside it.

- Developers need immediate feedback on every commit.

- Defect trends, coverage gaps, and release risks need to be visible to the entire team, not buried in a QA backlog that only one function monitors.

When quality becomes a shared operational responsibility rather than a handoff, it stops slowing down releases. It becomes the infrastructure that makes faster releases safe.

#5. Build Prompt Regression Suites for AI-Native Components

When your product includes AI-generated features, traditional testing is not enough. AI behavior can shift over time or produce unpredictable outputs, and standard test suites are not designed to catch that.

Managing this requires a structured process:

- Defining what good outputs look like

- Testing prompts the way you test code

- Scoring confidence levels, validating that outputs stay within acceptable boundaries, and monitoring continuously as the underlying models evolve.
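The steps above can be sketched as a tiny prompt regression harness. Everything here is an assumption made for illustration: the `PromptCase` shape, the keyword-based scoring, and the `fake_model` function, which stands in for a real model call. Production suites typically use richer scoring than keyword matching.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class PromptCase:
    prompt: str
    must_include: List[str]      # defines what a good output contains
    must_not_include: List[str]  # boundaries the output must stay within
    min_score: float = 1.0


def score(output: str, case: PromptCase) -> float:
    """Score an output from 0.0 to 1.0 against the case's expectations."""
    text = output.lower()
    if any(term.lower() in text for term in case.must_not_include):
        return 0.0  # a forbidden claim fails the case outright
    if not case.must_include:
        return 1.0
    hits = sum(term.lower() in text for term in case.must_include)
    return hits / len(case.must_include)


def run_regression(model: Callable[[str], str], cases: List[PromptCase]) -> List[str]:
    """Return the prompts whose output scored below the case's minimum."""
    return [c.prompt for c in cases if score(model(c.prompt), c) < c.min_score]


def fake_model(prompt: str) -> str:
    # Stand-in for a real model call; the canned answers are hypothetical.
    canned = {
        "Summarize our refund policy": "Refunds are available within 30 days of purchase.",
        "Can I get a refund after 60 days?": "Yes, refunds are guaranteed at any time.",
    }
    return canned.get(prompt, "")


cases = [
    PromptCase("Summarize our refund policy", ["refund", "30 days"], ["guaranteed"]),
    PromptCase("Can I get a refund after 60 days?", ["30 days"], ["guaranteed"]),
]

failures = run_regression(fake_model, cases)
print(failures)  # ['Can I get a refund after 60 days?']
```

Running the same cases on a schedule, and again whenever the underlying model or prompt changes, is what turns this from a one-off check into continuous monitoring.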

Teams that skip this are exposed to a specific category of production risk: behavior that was working last month is no longer working, with no system in place to detect it until a customer does.

As AI becomes embedded in core product logic, the reliability of that logic becomes a business risk, not just a technical one.

The Positive Outlook for QA Teams

Writing code used to be the hardest step in building software. Now the hard part is validation. New AI features are rolling out so quickly that people hardly have a chance to test them first. And once teams place all their trust in algorithms for coding, it becomes more difficult to keep control of the underlying logic.

QA engineers are not script writers. They never should have been positioned that way. They are the people in the room who understand how systems fail under real conditions and that judgment is now more valuable, not less.

The QA professionals who will thrive are the ones who evolve from execution management to quality architecture.

That means defining what correctness looks like for AI-generated components, designing the guardrails that autonomous test agents operate within, and owning the traceability model that connects business intent to test outcomes at enterprise scale.

The Outlook Is Clear, Even If the Path Isn’t

This is not a prediction for 2030. This shift is happening now.

According to DevOpsBay 2026, 70% of DevOps-driven organizations are expected to run shift-left and shift-right together as a combined quality model, and 40% of large enterprises will have AI assistants embedded directly in their CI/CD pipelines, selecting tests, analyzing logs, and triggering rollbacks.

The organizations that treat quality engineering as a strategic function, not a release gate, will be the ones that scale AI-assisted development without compounding technical debt and production risk.

The AI coding boom is here. It was always going to arrive. The teams that understood testing would have to evolve alongside it are already ahead.

The teams that haven’t started yet are not out of time. But the window is shorter than the sprint cadence might suggest.

If you’re looking for expert support with automation testing services, Kualitatem can help. As a TMMi Level 5 certified company, it has partnered with leading organizations like Google and Microsoft to deliver high-quality QA automation that scales with modern development.